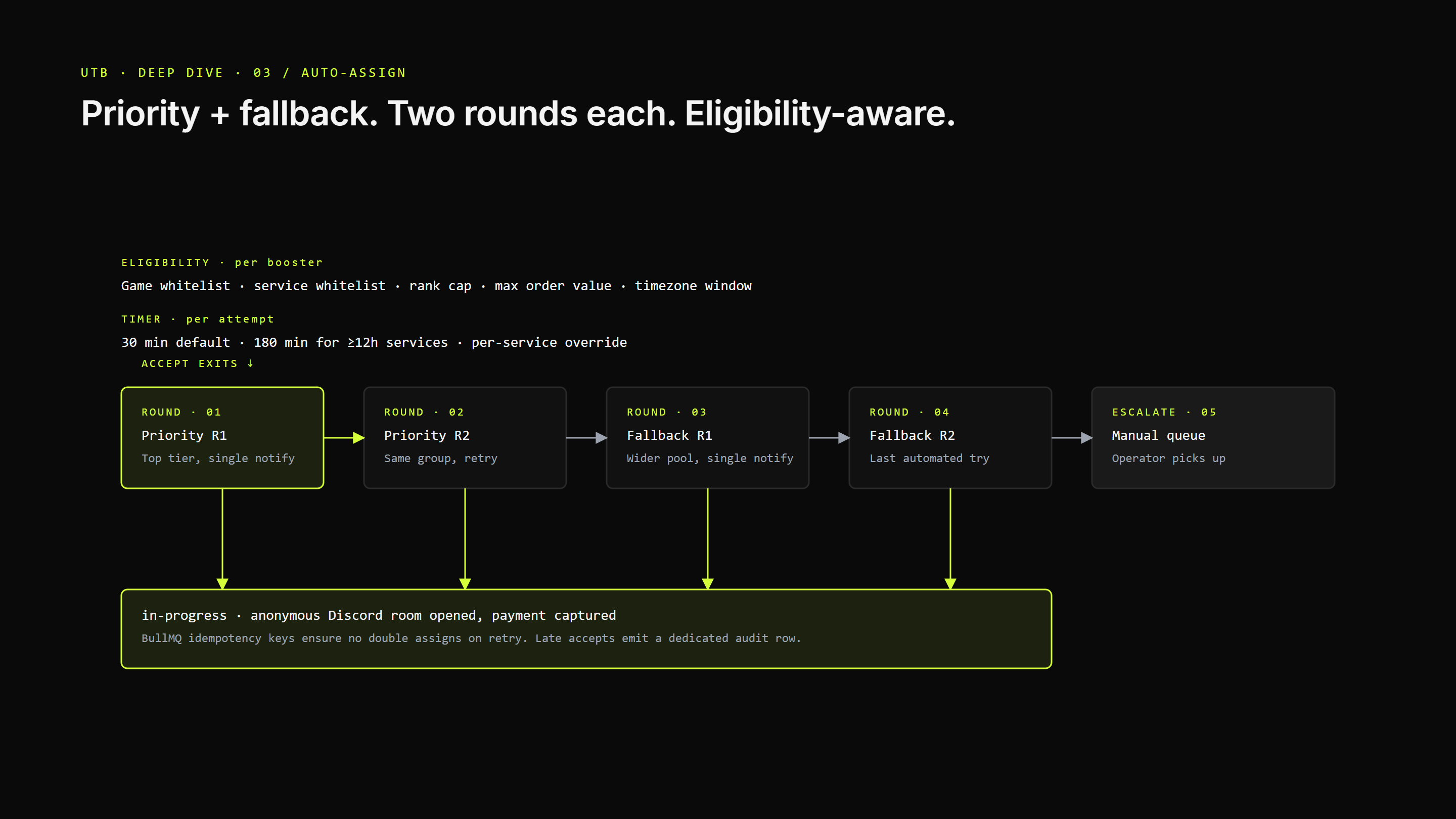

Boosters are split into priority and fallback groups per service. Within a group, the worker notifies one booster at a time and never broadcasts. Each group gets two rounds. The default per-attempt timer is 30 minutes; long-duration services (≥12 h) get 180 minutes, rush gets 30 minutes, and individual services can override. If everyone passes, the order escalates to manual-assignment-required and an operator picks it up. BullMQ idempotency keys make retries safe, so an order is never assigned twice. Eligibility is layered: Batch-8 strict whitelists (game / service / rank-tier / max order value), legacy service eligibility, a time-of-day window in the booster's timezone, and a concurrent-load cap (default 3). An accept that arrives after the timeout gets a dedicated audit row for admin review.
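The layered eligibility check described above could look roughly like the sketch below. Every name in it (`Booster`, `Order`, `firstRejection`, the field names) is hypothetical and not taken from the real codebase. It also assumes that all four layers must pass, that they are checked in the order listed, and that the time window is stored as local start and end hours.

```typescript
// Hypothetical shapes for illustration only.
interface Whitelist {
  games: string[];
  services: string[];
  rankTiers: string[];
  maxOrderValueCents: number;
}

interface Booster {
  whitelist: Whitelist;
  legacyServices: string[]; // assumed form of "legacy service eligibility"
  timeZone: string;         // IANA zone name, e.g. "Europe/Berlin"
  windowStartHour: number;  // inclusive, booster-local hour 0-23
  windowEndHour: number;    // exclusive, booster-local hour 0-23
  activeOrders: number;
  maxConcurrent?: number;   // falls back to the default cap
}

interface Order {
  game: string;
  service: string;
  rankTier: string;
  valueCents: number;
}

type Rejection = "whitelist" | "legacy-service" | "time-window" | "load-cap";

const DEFAULT_MAX_CONCURRENT = 3; // default cap stated in the text

// Hour of day (0-23) in the given timezone.
function localHour(at: Date, timeZone: string): number {
  const text = at.toLocaleString("en-US", { timeZone, hour: "numeric", hour12: false });
  return Number(text) % 24; // some runtimes print midnight as "24"
}

// True when `hour` falls in [start, end); supports windows that wrap past midnight.
function inWindow(hour: number, start: number, end: number): boolean {
  return start <= end ? hour >= start && hour < end : hour >= start || hour < end;
}

// Returns the first layer that rejects the booster, or null if all layers pass.
function firstRejection(b: Booster, o: Order, at: Date): Rejection | null {
  const w = b.whitelist;
  const whitelisted =
    w.games.includes(o.game) &&
    w.services.includes(o.service) &&
    w.rankTiers.includes(o.rankTier) &&
    o.valueCents <= w.maxOrderValueCents;
  if (!whitelisted) return "whitelist";
  if (!b.legacyServices.includes(o.service)) return "legacy-service";
  if (!inWindow(localHour(at, b.timeZone), b.windowStartHour, b.windowEndHour)) {
    return "time-window";
  }
  if (b.activeOrders >= (b.maxConcurrent ?? DEFAULT_MAX_CONCURRENT)) return "load-cap";
  return null;
}
```

Returning the rejecting layer rather than a bare boolean is a design choice of this sketch: it gives an audit trail something concrete to record when a booster is skipped.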

- Priority R1 → Priority R2 → Fallback R1 → Fallback R2 → manual.

- Sequential single-booster notifications — no auction noise.

- Per-booster game / service / rank-tier / max-order-value whitelists.

- Service-level timeout override; 30-min default; 180-min long-duration heuristic.

- Late-accept admin signal as its own audit event.
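The escalation ladder and timer rules summarised above can be sketched as follows. This is a minimal in-memory illustration, not the actual worker: `dispatch`, `attemptTimeoutMinutes`, `ServiceConfig`, and the `offer` callback are all hypothetical names, and the `offer` callback stands in for whatever queue-backed notification and timer the real system uses. Two further assumptions: a per-service override beats the rush rule, which beats the long-duration heuristic, and round two re-notifies the same boosters in the same order.

```typescript
type Stage = "priority-r1" | "priority-r2" | "fallback-r1" | "fallback-r2";

interface Groups {
  priority: string[]; // booster ids, already filtered for eligibility
  fallback: string[];
}

// Priority R1 -> Priority R2 -> Fallback R1 -> Fallback R2.
const LADDER: ReadonlyArray<{ stage: Stage; group: keyof Groups }> = [
  { stage: "priority-r1", group: "priority" },
  { stage: "priority-r2", group: "priority" },
  { stage: "fallback-r1", group: "fallback" },
  { stage: "fallback-r2", group: "fallback" },
];

interface ServiceConfig {
  durationHours: number;
  rush: boolean;
  timeoutOverrideMinutes?: number; // service-level override
}

// Assumed precedence: override, then rush, then long-duration, then default.
function attemptTimeoutMinutes(s: ServiceConfig): number {
  if (s.timeoutOverrideMinutes !== undefined) return s.timeoutOverrideMinutes;
  if (s.rush) return 30;
  if (s.durationHours >= 12) return 180;
  return 30;
}

// Resolves to true if the booster accepted before the timer ran out.
type Offer = (boosterId: string, timeoutMinutes: number) => Promise<boolean>;

type Outcome =
  | { status: "assigned"; boosterId: string; stage: Stage }
  | { status: "manual-assignment-required" };

// Walks the ladder, offering the order to exactly one booster at a time.
async function dispatch(groups: Groups, service: ServiceConfig, offer: Offer): Promise<Outcome> {
  const timeout = attemptTimeoutMinutes(service);
  for (const { stage, group } of LADDER) {
    for (const boosterId of groups[group]) {
      if (await offer(boosterId, timeout)) {
        return { status: "assigned", boosterId, stage };
      }
    }
  }
  // Everyone passed or timed out: hand the order to an operator.
  return { status: "manual-assignment-required" };
}
```

Because each offer is awaited before the next one is sent, no two boosters ever hold the same order at once, which is what keeps the flow free of auction-style races.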

- Deep dive · 01: System architecture. Four apps, one monorepo, typed end-to-end. Six services in compose; a shared contracts package as source of truth.

- Deep dive · 02: Order state machine. Nine DB statuses; six surfaced to customers. Every transition emits a typed audit event.

- Deep dive · 04: Anonymous Discord ops rooms. Discord is the ops UI. Per-order private channels named from a UUID hex. Reaction-driven ratings. Discord-as-admin-console.

- Deep dive · 05: Stripe pricing parity + rewards. Shared pricing module so FE / BE / Stripe agree to the cent. 3% processor fee as a transparent line item.

- Deep dive · 06: Operator console + live SSE. Calm, keyboard-first, audit-first. Real-time updates via Redis pub/sub → SSE; engine pause / resume / start as first-class controls.
